Building a scalable computer vision pipeline for Intelligent Transportation Systems (ITS) using Kafka

The adoption of pan-tilt-zoom (PTZ) CCTV cameras in intelligent transportation systems is on the rise, driven by declining costs and enhanced capabilities. In certain instances, roads spanning hundreds of kilometers are equipped with CCTV cameras placed every 200 to 500 meters, ensuring comprehensive road coverage.

While CCTV cameras provide an effective way for operators to monitor roads and rails, the growing number of PTZ cameras across transport networks has increased the work and cognitive burden for staff. How can the latest advancements in artificial intelligence, computer vision, and digital technology help reduce this burden?

Cameras have been in use on road and rail networks for several decades, being deployed in areas where it is necessary for operators to have a view of road conditions. In the past, when cameras were expensive and only a few could be purchased, they would be strategically placed in high-risk areas such as junctions, slip roads, toll booths, and tunnels for optimum coverage.

These legacy cameras were normally analog, low-resolution systems, fixed in position with no zoom capabilities or motors for movement, which meant operators had limited visibility of roads. If an incident happened outside the camera’s field of view (FOV), there was little an operator could do apart from waiting for a patrol to arrive on the scene and assess the situation.

Fast forward to today, and many stretches of road have multiple sophisticated HD cameras that allow operators to monitor and understand what is happening in real time, as well as prioritize the allocation of resources. These cameras are no longer fixed in position but offer pan-tilt-zoom capabilities, which means they can rotate 360 degrees, move up and down, and zoom in and out. They also leverage 4G and 5G technologies to avoid the need for expensive networking infrastructure such as fiber optics.

Computer Vision Use Cases for Intelligent Transport Systems (ITS)

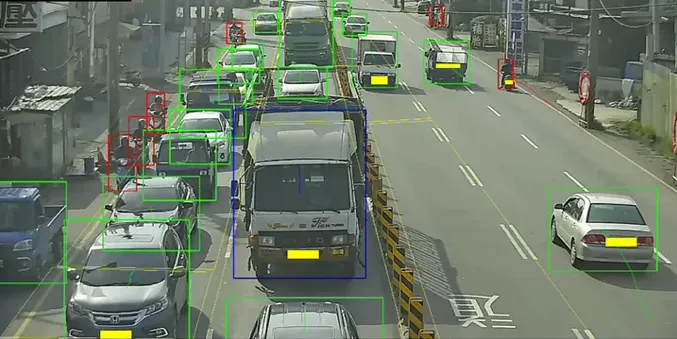

Computer vision is one of the most valuable data sources for intelligent transport systems and digital twins. It provides rich and relatively accurate insights for many domains and use cases, including:

- Event detection. Detecting events in real time, such as hazards, stopped vehicles, wrong-way driving, and pedestrians or animals on the road

- Risk prediction. Detecting near misses such as harsh braking or antisocial driving

- Weather data. Fog, rain, wind, ice, or snow

- Queue length and junction optimization

- License plate recognition

- Traffic flow. Vehicle flow, speed, and classification

- Security and vandalism

- GIS. Identifying the location and condition of assets such as signs, road markings, potholes and crash barriers

As much as computer vision is compelling, it is also highly challenging:

- Cameras perform poorly at night unless they have dedicated infrared (IR) illumination

- There are many brands and models for cameras, with different protocols, compression types, interfaces, lens types and capabilities.

- Some places still use analog cameras for monitoring while others use sophisticated CCTV cameras with edge processing capabilities

- Processing of video data is complex and expensive

- Accuracy declines in poor weather conditions such as fog, rain, snow, and strong wind.

- PTZ cameras also present a significant challenge for computer vision, which will be discussed later

Computer vision pipelines

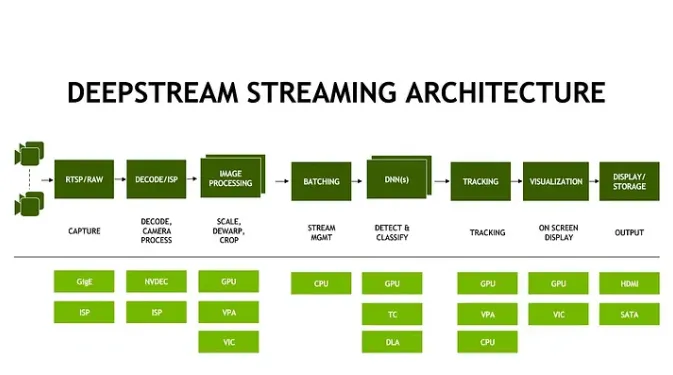

Computer vision pipelines for intelligent transport normally include some or all of the following steps:

- Integrating with the camera feed

- Pre-processing the video: scaling resolution or frames per second (FPS), noise correction and color correction

- Decoding the video from a compressed format such as H.264 into raw images

- Masking the video to remove areas that are not relevant

- Detecting features, objects or segmented regions (2D or 3D) in each frame, including background and foreground separation

- Classifying objects (semantic labeling)

- Geolocating objects from image space to GIS space

- Tracking the objects using feature matching and dynamic models

- Analyzing the scene: trajectory analysis, rule application and anomaly detection

- Extracting events and insights and raising alerts

- Adding overlays to the video and encoding it back into multiple resolutions to simplify viewing

With all of these steps, it’s important to note that AI and computer vision are still very limited and do not match the depth and sophistication of the human brain.

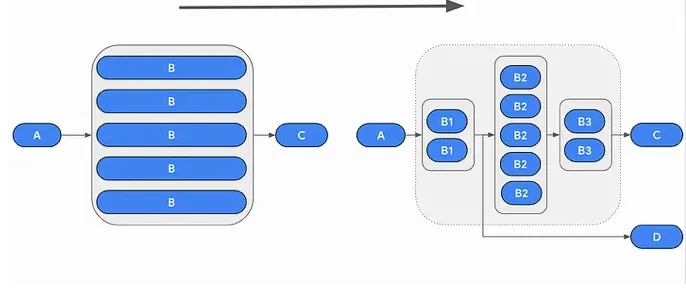

Generally, there are a few approaches to implementing computer vision pipelines:

Tenancy model

- Pipeline per camera (Single tenant). Each camera has a dedicated pipeline that includes all of these steps

- Pipeline across many cameras (Multi-tenant). One pipeline handles the processing for many cameras and the compute resources are shared across all cameras

Rigid vs Agile

- Rigid pipeline: Harder to extend, as any change requires full testing and is limited by the CPU and memory allocated to the pipeline

- Agile pipeline: Easier to add new functionality and local changes do not create ripple effects

Rigid single-tenant pipelines are most common in edge devices, while agile multi-tenant architectures are more popular in cloud or data center deployments, where each step can be scaled with the appropriate resources.

Hybrid approaches are also possible, where some steps are single-tenant while others are shared, or where some processing occurs on premises while the remainder runs in the cloud.

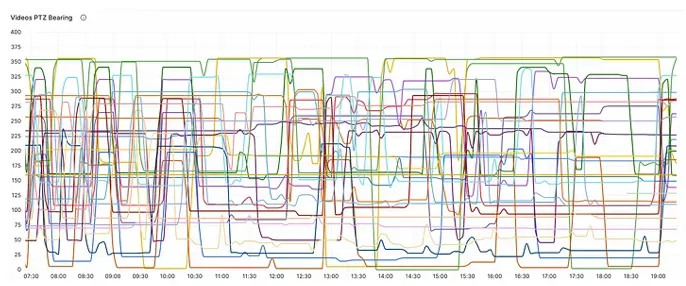

The PTZ Challenge

Transport operators almost always prefer PTZ CCTV cameras over static ones. PTZ cameras increase coverage and allow operators to zoom in on events miles away, meaning fewer cameras may need to be installed.

However, for computer vision purposes, PTZ is extremely challenging.

For example, with a fixed camera image it is possible to “mask” or manually label areas and objects in the scene—such as a junction, bus stop, carriageway or bridge—to help computer vision algorithms focus on specific locations when detecting and classifying events.
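
As a rough illustration of masking on a fixed camera, the sketch below keeps only detections whose bottom-centre point falls inside a hand-drawn polygon. The polygon coordinates, the `bbox` field and the function names are assumptions made for this example, not part of any particular product.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def point_in_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: True if the point lies inside the polygon."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


# Hypothetical carriageway region, in pixel coordinates, for one fixed camera.
CARRIAGEWAY_MASK: List[Point] = [(200, 1080), (800, 400), (1100, 400), (1700, 1080)]


def filter_detections(detections: List[Dict], mask: List[Point]) -> List[Dict]:
    """Keep detections whose bottom-centre point is inside the mask."""
    kept = []
    for det in detections:
        x_min, y_min, x_max, y_max = det["bbox"]
        ground_point = ((x_min + x_max) / 2, y_max)
        if point_in_polygon(ground_point, mask):
            kept.append(det)
    return kept
```

The mask is tied to one camera pose. As soon as the camera pans, tilts or zooms, the same pixel polygon covers a different part of the scene.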

With a moving PTZ camera, this becomes much more difficult because the field of view is constantly changing, meaning the baseline state of the algorithm must also change.

Another example is traffic speed measurement. Speed is calculated by measuring the time it takes a vehicle to pass a known fixed distance. If the PTZ parameters of the camera change, those distances are no longer accurate, which can significantly distort calculated speeds.
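
The effect can be shown with simple arithmetic. The sketch below assumes two virtual lines drawn in the image that were calibrated as 50 m apart on the road, and a zoom change after which the same two image lines span only about 25 m. All numbers are illustrative.

```python
def speed_kmh(distance_m: float, t_enter_s: float, t_exit_s: float) -> float:
    """Speed from the time taken to cover a known distance."""
    elapsed = t_exit_s - t_enter_s
    if elapsed <= 0:
        raise ValueError("exit time must be after entry time")
    return distance_m / elapsed * 3.6  # m/s to km/h


# Calibrated view: the lines really are 50 m apart, crossed in 2.0 s (90 km/h).
calibrated = speed_kmh(50.0, 10.0, 12.0)

# After zooming in, the same image lines span roughly 25 m of road, so a vehicle
# at the same true speed crosses them in 1.0 s. The pipeline still assumes 50 m,
# so the reported speed doubles (180 km/h).
distorted = speed_kmh(50.0, 10.0, 11.0)
```

A pipeline that reads the current PTZ state could either rescale the distance or suspend speed measurement until the camera returns to a calibrated preset.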

Finally, geolocating an event within image space is already challenging with static cameras and typically requires manual calibration. With PTZ cameras that zoom and rotate freely—and may have unknown lens distortion and shifting perspective—this becomes significantly more complex.
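
For a static camera looking at a roughly flat road, one common way to express the calibration is a 3x3 homography that maps pixels to ground-plane coordinates. The sketch below assumes an ideal pinhole camera with no lens distortion, and the matrix values are made up for illustration rather than taken from a real calibration.

```python
from typing import Sequence, Tuple

Matrix3 = Sequence[Sequence[float]]


def image_to_ground(h: Matrix3, u: float, v: float) -> Tuple[float, float]:
    """Map pixel (u, v) to ground-plane coordinates using homography h."""
    x = h[0][0] * u + h[0][1] * v + h[0][2]
    y = h[1][0] * u + h[1][1] * v + h[1][2]
    w = h[2][0] * u + h[2][1] * v + h[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel maps to the horizon line (w is zero)")
    # Dividing by w is what models perspective foreshortening.
    return x / w, y / w


# Hypothetical calibration for one camera pose (metres in a local frame).
H_PRESET_1: Matrix3 = [
    [0.1, 0.0, 0.0],
    [0.0, 0.2, 0.0],
    [0.0, 0.001, 1.0],
]

ground_x, ground_y = image_to_ground(H_PRESET_1, 100.0, 500.0)
```

The matrix is valid for a single pan, tilt and zoom setting. A PTZ camera would need one matrix per calibrated preset, or a model that recomputes it from the reported PTZ state.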

PTZ Opportunities

PTZ also presents opportunities for computer vision technology. For example, the system can automatically control cameras, directing them toward high-risk areas or initiating repetitive scans to actively detect hazards.

Computer Vision Pipelines over Kafka

Before we discuss architecture concepts for implementing computer vision pipelines, we need to map the technical requirements.

Computer Vision Pipelines Requirements

- Scalable. Can scale to support thousands of cameras

- Real-time. The entire processing chain needs to complete within seconds

- Resilient. Highly available system

- Open. Support for major CCTV protocols and codecs, such as RTSP, ONVIF, H.264, and MJPEG

- Bypass. If some cameras already perform some of the steps (e.g. object detection), they can bypass these steps and push their data into the middle of the pipeline

- Decoupled. Clear separation of concerns and functionality. Individual components can be updated without affecting the entire system. For example, if the pipeline uses YOLOv1 for object detection, upgrading that step to YOLOv10 should not send a shock wave through the rest of the system.

- Modular. Easy to add new processing capabilities and models

- FAIR. Data should be findable, accessible, interoperable, and reusable

- Affordable. Use economy-of-scale techniques. For example, use the right hardware for the right task: GPUs (Nvidia TensorRT, CUDA) for AI models, while other tasks might run more efficiently on a CPU. Auto-scale variable-load tasks, such as the tracker, up during rush hour and down at night. Choose the right video resolution and FPS to balance performance and cost.

- Upgradeable. Zero-downtime upgrades of the core and services
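
The bypass requirement can be sketched as a small routing rule: each stage reads from its own input topic, and a camera publishes to the input of the first stage it has not already performed. The stage names and topic names below are hypothetical and chosen only for this example.

```python
from typing import Optional

# Ordered pipeline stages and the (hypothetical) topic each stage consumes.
STAGE_ORDER = ["decode", "detect", "track", "analyze"]
STAGE_INPUT_TOPICS = {
    "decode": "its.video.encoded",
    "detect": "its.frames.raw",
    "track": "its.detections",
    "analyze": "its.tracks",
}
FINAL_OUTPUT_TOPIC = "its.events"


def entry_topic(last_completed_stage: Optional[str] = None) -> str:
    """Topic a camera should publish to, given the last stage it runs itself."""
    if last_completed_stage is None:
        return STAGE_INPUT_TOPICS[STAGE_ORDER[0]]
    # Raises ValueError for an unknown stage name.
    index = STAGE_ORDER.index(last_completed_stage)
    if index + 1 == len(STAGE_ORDER):
        return FINAL_OUTPUT_TOPIC
    return STAGE_INPUT_TOPICS[STAGE_ORDER[index + 1]]
```

A plain camera would call `entry_topic()` and publish encoded video, while a smart camera with on-board detection would call `entry_topic("detect")` and publish straight into the tracker's input.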

Kafka

Kafka is an open-source, real-time, mature, resilient and scalable streaming platform used by many technology companies to stream events and data between services. Although complex to tune, it is one of the few solutions capable of meeting the requirements outlined above.

Solution Concepts

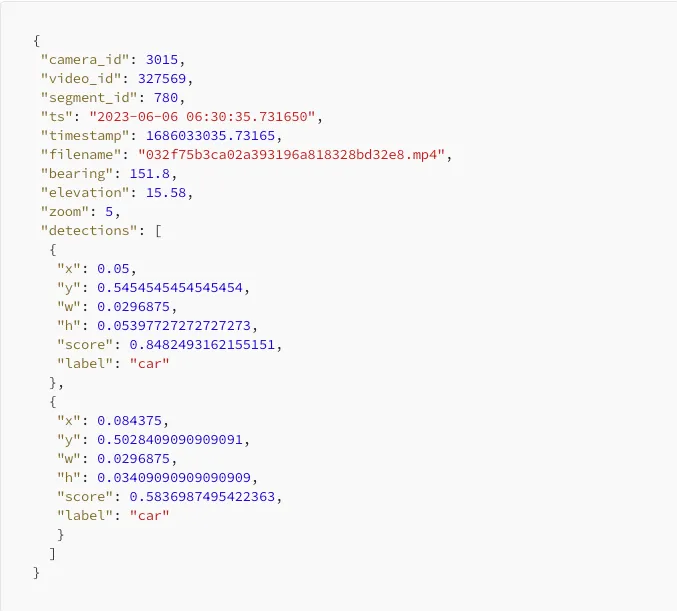

Kafka should serve as the system’s source of truth. All data—raw, processed and enriched—should first be available in Kafka and then routed to the appropriate services.

Video and images: Although Kafka can support video and image data, storing large multimedia files directly in Kafka is difficult to manage. A more practical approach is to store them in an external shared file system and reference them within Kafka messages.
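
A minimal sketch of this reference pattern is shown below: the Kafka message carries only metadata and a pointer to the stored frame. The field names and the example path are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class FrameRef:
    """Small message that points at a frame stored outside Kafka."""
    camera_id: str
    captured_at_ms: int
    frame_uri: str  # location in the shared file system (hypothetical layout)
    width: int
    height: int


def encode_frame_ref(ref: FrameRef) -> bytes:
    """Serialize the reference as compact JSON, ready to use as a message value."""
    return json.dumps(asdict(ref), separators=(",", ":")).encode("utf-8")


def decode_frame_ref(payload: bytes) -> FrameRef:
    """Rebuild the reference from a message value."""
    return FrameRef(**json.loads(payload.decode("utf-8")))


ref = FrameRef(
    camera_id="cam-0042",
    captured_at_ms=1700000000000,
    frame_uri="file:///mnt/frames/cam-0042/1700000000000.jpg",
    width=1920,
    height=1080,
)
payload = encode_frame_ref(ref)
```

The payload stays in the range of a few hundred bytes regardless of image resolution, so topic retention and replication costs are driven by metadata rather than pixels.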

Microservice architecture: Tasks should be implemented as stateless or stateful Dockerized microservices orchestrated using frameworks such as Kubernetes.

Processing should be broken into smaller modular steps, each handled by its own agent. Large, monolithic processing steps create several issues: intermediate results cannot be reused, observability becomes limited, debugging becomes harder and scaling becomes inefficient.
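
The idea of one agent per step can be sketched without a broker. In the example below an in-memory bus stands in for Kafka topics, and the `detect` and `classify` functions are stubs. Unlike a real Kafka topic, this stand-in removes messages when they are read, so it shows the chaining of agents but not the reuse of retained intermediate results.

```python
from collections import defaultdict, deque
from typing import Callable, Dict, Optional


class InMemoryBus:
    """Stand-in for Kafka topics: one FIFO queue per topic name."""

    def __init__(self) -> None:
        self.topics = defaultdict(deque)

    def publish(self, topic: str, message: Dict) -> None:
        self.topics[topic].append(message)

    def poll(self, topic: str) -> Optional[Dict]:
        queue = self.topics[topic]
        return queue.popleft() if queue else None


def run_agent(bus: InMemoryBus, in_topic: str, out_topic: str,
              step: Callable[[Dict], Dict]) -> int:
    """Drain in_topic, apply one processing step, publish to out_topic."""
    processed = 0
    while True:
        message = bus.poll(in_topic)
        if message is None:
            break
        bus.publish(out_topic, step(message))
        processed += 1
    return processed


def detect(message: Dict) -> Dict:
    """Stub detector: attaches one fixed bounding box."""
    return {**message, "boxes": [(10, 20, 50, 80)]}


def classify(message: Dict) -> Dict:
    """Stub classifier: labels every box as a car."""
    return {**message, "labels": ["car" for _ in message["boxes"]]}


bus = InMemoryBus()
bus.publish("frames", {"camera_id": "cam-1", "frame_id": 1})
run_agent(bus, "frames", "detections", detect)
run_agent(bus, "detections", "classified", classify)
result = bus.poll("classified")
```

Because each agent only knows its input and output topic, the detector could be replaced or scaled independently of the classifier.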

In addition to computer vision, Kafka can stream data from other sources such as sensors, weather services, radars and connected vehicles. These data sources can either assist computer vision tasks or be fused with them to produce more complete, accurate and actionable insights.

Kafka partitioning and replication factors should be carefully configured to ensure scalability and resilience. For example, Kafka topics can be partitioned geographically or by camera.
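
Partitioning by camera usually means using the camera ID as the message key, so that all messages from one camera land in the same partition and keep their order. The sketch below illustrates the mapping with CRC32 as a stand-in hash. Kafka's default Java partitioner uses murmur2 on the key bytes, so the actual partition numbers would differ.

```python
import zlib
from collections import Counter


def partition_for(camera_id: str, num_partitions: int) -> int:
    """Map a camera ID to a partition (CRC32 stand-in, not Kafka's murmur2)."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    return zlib.crc32(camera_id.encode("utf-8")) % num_partitions


# Spread 1000 hypothetical cameras over 12 partitions and count the load.
load = Counter(partition_for(f"cam-{i:04d}", 12) for i in range(1000))
```

A geographic scheme would work the same way with a road-segment or region identifier as the key, at the cost of uneven load if some regions have many more cameras than others.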

Kafka lag monitoring should be used to guarantee service levels and end-to-end latency. Lag metrics can also be used to automatically scale services.
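
Consumer lag for a partition is the difference between the latest offset in the log and the offset the consumer group has committed. The sketch below turns that into a replica count. The target lag per replica is an assumed tuning parameter, and the offsets are example numbers rather than values read from a cluster.

```python
import math
from typing import Dict


def total_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> int:
    """Sum of (log end offset - committed offset) over all partitions."""
    return sum(max(end_offsets[p] - committed[p], 0) for p in end_offsets)


def desired_replicas(lag: int, target_lag_per_replica: int,
                     num_partitions: int, min_replicas: int = 1) -> int:
    """Replica count for a consumer group, capped at the partition count."""
    wanted = math.ceil(lag / target_lag_per_replica)
    # Consumers beyond the number of partitions would sit idle.
    return max(min_replicas, min(wanted, num_partitions))


end_offsets = {0: 1500, 1: 1200, 2: 900}
committed = {0: 1000, 1: 1100, 2: 900}
lag = total_lag(end_offsets, committed)
replicas = desired_replicas(lag, target_lag_per_replica=250, num_partitions=3)
```

The cap is the reason partition counts should be chosen with peak load in mind: a topic with three partitions cannot usefully be served by more than three consumers in one group.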

Schema management tools should be used to manage schemas across the entire system for both JSON and Avro formats.
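
As an illustration of what such a schema might look like, the sketch below defines an Avro-style record for a detection event and checks records against it. The record name and fields are hypothetical, and the checker is a hand-written stand-in covering only the primitive and union types used here, not a real Avro library or schema registry client.

```python
import json
from typing import Dict

# Hypothetical Avro record schema for one detection event.
DETECTION_EVENT_SCHEMA = {
    "type": "record",
    "name": "DetectionEvent",
    "namespace": "its.vision",
    "fields": [
        {"name": "camera_id", "type": "string"},
        {"name": "captured_at_ms", "type": "long"},
        {"name": "label", "type": "string"},
        {"name": "confidence", "type": "double"},
        {"name": "frame_uri", "type": ["null", "string"], "default": None},
    ],
}

_PRIMITIVES = {"string": str, "long": int, "double": float, "null": type(None)}


def conforms(record: Dict, schema: Dict) -> bool:
    """Check a record against the small subset of Avro used above."""
    for field in schema["fields"]:
        name, field_type = field["name"], field["type"]
        if name not in record:
            if "default" in field:
                continue  # a missing field is fine when a default exists
            return False
        allowed = field_type if isinstance(field_type, list) else [field_type]
        if not any(isinstance(record[name], _PRIMITIVES[t]) for t in allowed):
            return False
    return True


schema_json = json.dumps(DETECTION_EVENT_SCHEMA)  # form a registry would store
```

Keeping the schema in one place lets producers and consumers evolve independently, for example by adding optional fields with defaults.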

A/B Testing

Kafka simplifies the testing of new features and capabilities. A parallel branch of selected tasks can be brought up alongside the main branch, and the results of the two branches compared in real time.
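
One way this can work is for the test branch to read the same input topic under a separate consumer group and write to its own output topic, so both branches see identical input. The sketch below compares the two outputs. The event fields and the agreement metric are assumptions chosen for illustration.

```python
from collections import Counter
from typing import Dict, List, Tuple


def _event_key(event: Dict) -> Tuple[str, int, str]:
    """Identify an event by camera, frame and label."""
    return (event["camera_id"], event["frame_id"], event["label"])


def compare_branches(main_events: List[Dict], test_events: List[Dict]) -> Dict:
    """Count events seen by both branches and by only one of them."""
    main_keys = Counter(_event_key(e) for e in main_events)
    test_keys = Counter(_event_key(e) for e in test_events)
    matched = sum((main_keys & test_keys).values())
    only_main = sum((main_keys - test_keys).values())
    only_test = sum((test_keys - main_keys).values())
    total = matched + only_main + only_test
    return {
        "matched": matched,
        "only_main": only_main,
        "only_test": only_test,
        "agreement": matched / total if total else 1.0,
    }


main_branch = [
    {"camera_id": "cam-1", "frame_id": 1, "label": "car"},
    {"camera_id": "cam-1", "frame_id": 1, "label": "truck"},
    {"camera_id": "cam-1", "frame_id": 2, "label": "car"},
]
test_branch = [
    {"camera_id": "cam-1", "frame_id": 1, "label": "car"},
    {"camera_id": "cam-1", "frame_id": 2, "label": "car"},
    {"camera_id": "cam-1", "frame_id": 2, "label": "pedestrian"},
]
report = compare_branches(main_branch, test_branch)
```

Disagreements are not errors by themselves. They mark the frames worth reviewing before deciding whether the test branch should replace the main one.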

Summary

Scaling computer vision pipelines to handle thousands of PTZ CCTV cameras is a complex engineering task that requires skill, planning, and solid experience in both software architecture and computer vision. When these are combined the right way as part of a full digital twin system for intelligent transportation, the result can be a powerful and sophisticated system that comes closer to what operators now expect, after years of hearing the promises of big data, AI, and cloud technologies.
